Google BigQuery 101: Simple Queries to Practice in SQL

Enhance your skills in GBQ by aggregating data, managing dates, strings, and exploring subsets through practice with demo data

The more information a business accumulates, the more questions about where to store it are there. If you don’t have the ability or desire to maintain your own servers, or simply if you don’t want to - Google BigQuery (GBQ) can help you out.

BigQuery provides fast, cost-effective, and scalable storage for working with big amount of data, and it allows you to write queries using SQL-like syntax as well as standard and user-defined functions.

In this article, we’ll take a look at the main BigQuery functions and show the possibilities using specific examples with SQL queries you can run. You’ll learn how to write basic queries and test them on demo data.

Google BigQuery's Leading Features

- Effortless Scalability: Google BigQuery excels in handling vast datasets with ease, thanks to its use of massively parallel processing and a robust, scalable storage system. This ensures smooth performance and scalability for its users.

- Zero Maintenance Hassle: Google Bigquery is a part of the Google Cloud Platform and it's fully managed, meaning you don't need to maintain any infrastructure.

- Simplified Management: With its serverless architecture, Google BigQuery eliminates the complexities of maintenance and setup tasks such as managing servers or virtual machines, adjusting sizes, and overseeing memory. This enables users to prioritize data analysis over managing infrastructure.

- Advanced AI and Machine Learning Capabilities: BigQuery integrates cutting-edge AI and machine learning technologies, featuring an automatic machine learning tool that simplifies the creation of accurate models for predictive analytics.

- Optimized Storage Solution: Utilizing a columnar storage approach, Google BigQuery offers an efficient way to store and manage large quantities of data. This method enhances storage efficiency and accelerates data retrieval times compared to traditional row-based storage systems.

Here is the article where we specify why Google BigQuery is the best data warehouse specifically for storing and processing marketing data.

What is SQL, and What Dialects does BigQuery Support

Structured Query Language (SQL) allows you to retrieve data from, add data to, and modify data in large arrays. Google BigQuery supports two SQL dialects: Standard SQL and Legacy SQL.

Which dialect to choose depends on your preferences, but Google recommends using Standard SQL for these benefits:

- Flexibility and functionality for nested and repeating fields

- Support for the DML and DDL languages, allowing you to change data in tables as well as manage tables and views in GBQ

- Faster processing of large amounts of data compared to Legacy SQL

- Support for all future BigQuery updates

You can learn more about the dialect differences in the BigQuery documentation.

See also: What are the advantages of the new Standard SQL dialect of Google BigQuery over Legacy SQL, and what business tasks can you solve with it.

By default, Google BigQuery queries run on Legacy SQL.

You can switch to Standard SQL in several ways:

- In the BigQuery interface, in the query editing window, select Query Settings Options and Advanced options section and remove the checkmark next to Legacy under SQL Dialect:

- Before querying, add the line #standardSQL and start your query with a new line:

Sublanguages in SQL

SQL encompasses different subsets of commands, each serving a unique purpose in database management and interaction:

- Query Statements (DQL): Serve as the main tool for data analysis within BigQuery, allowing for the scanning and computation of results from tables or expressions.

- Data Definition Language (DDL): Facilitates the creation and alteration of database objects like tables, views, functions, and row-level access policies.

- Data Manipulation Language (DML): Provides the means to modify data within BigQuery tables through updates, inserts, and deletes.

- Data Control Language (DCL): Offers control over BigQuery system resources, managing access and capacity.

- Transaction Control Language (TCL): Supports the management of transactions, particularly for modifying data.

SQL Syntax Notation Rules

Here specific syntax notation rules are employed to clarify how commands and queries should be structured:

- Square Brackets [ ]: Indicate that a clause is optional. You may include it in your query if needed, but it's not mandatory.

- Curly Braces with Vertical Bars { a | b | c }: Denote a choice between options. You should select one of the options enclosed within the braces.

- Ellipsis ...: Signify that the item immediately before the ellipsis can be repeated. This allows for the inclusion of multiple instances of the preceding element.

- Red Square Brackets [ ]: Highlight elements that are mandatory and form an essential part of the syntax. These items must be included in your query.

- Red Curly Braces { }: Similar to the regular curly braces but colored red to emphasize that the enclosed elements are compulsory parts of the command or query.

- Red Vertical Bar|: When colored red, the vertical bar indicates a mandatory choice within the syntax. It separates required options, one of which must be selected for the command to be valid.

Where to Start From in BigQuery

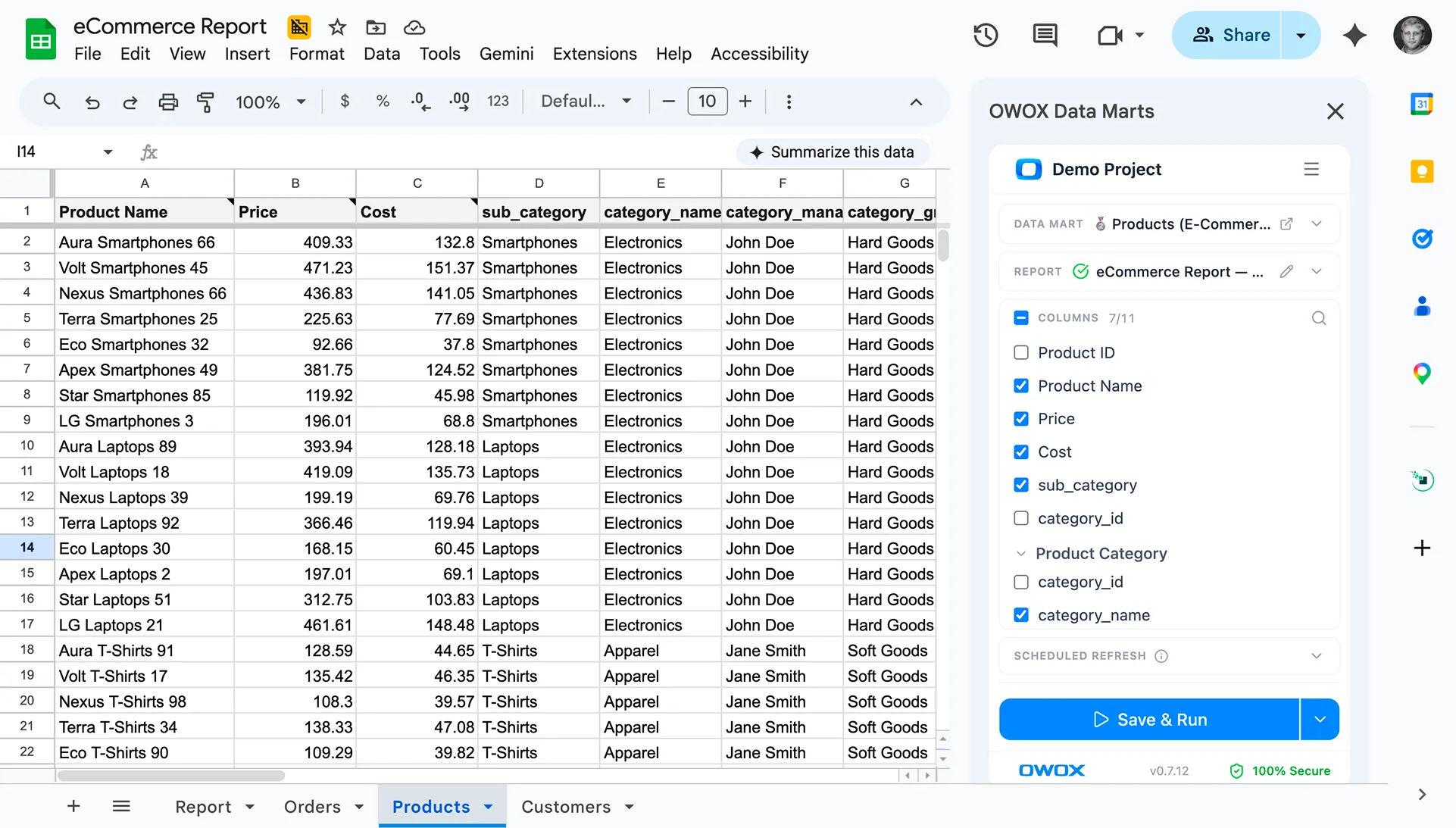

So you can practice and run queries with us, we’ve prepared a table with demo data.

To get started, download your demo data table and upload it to your Google BigQuery project. The easiest and free way to do this is with the OWOX Reports.

- Open Google Sheets and install the OWOX Reports.

- Open the table you downloaded that contains demo data and select OWOX Reports –> Upload Data to BigQuery:

- In the window that opens, choose your Google BigQuery project data set, and think up a name for the table in which the loaded data will be stored.

- Specify a format for the loaded data (as shown in the screenshot):

If you don’t have a project in Google BigQuery, you can create one here.

To do this, you’ll need an active billing account in the Google Cloud Platform. Don’t let it scare you that you need to link a bank card: you won’t be charged anything without your knowledge. In addition, when you register, you’ll receive $300 for 12 months that you can spend on data storage and processing.

Legacy SQL vs Standard SQL in BigQuery

Before talking about Google BigQuery features, let’s remember what basic queries look like in both the Legacy SQL and Standard SQL dialects:

Select fields from the table

- Legacy SQL: SELECT field1, field2

- Standard SQL: SELECT field1, field2

Select a table from which to choose fields

- Legacy SQL: FROM [projectID:dataSet.tableName]

- Standard SQL: FROM `projectID.dataSet.tableName`

Select the parameter by which to filter values

- Legacy SQL: WHERE field1 = value

- Standard SQL: WHERE field1 = value

Select fields by which to group results

- Legacy SQL: GROUP BY field1, field2

- Standard SQL: GROUP BY field1, field2

Select how to order results

- Legacy SQL: ORDER BY field1 ASC (ascending) or DESC (descending)

- Standard SQL: ORDER BY field1 ASC (ascending) or DESC (descending)

Google BigQuery Features

When building queries, you’ll most frequently use aggregate, date, string, and window functions. Let’s take a closer look at each of these groups of functions.

Here is how to start working with BigQuery, how to create a dataset and tables and configure the importing of data to Google BigQuery.

Aggregate functions

Bigquery Aggregate functions provide summary values for an entire table. For example, you can use them to calculate the average check size or total revenue per month, or you can use them to select the segment of users who made the maximum number of purchases.

These are the most popular Bigquery aggregate functions:

AVG()

- Legacy SQL: AVG()

- Standard SQL: AVG

- What it does: Gets the average of non-NULL values. In Legacy SQL, it calculates the average within a group of rows based on a numeric expression, excluding NULL values.

MAX(field)

- Legacy SQL: MAX(field)

- Standard SQL: MAX(field)

- What it does: Returns the maximum value from the field column. In Standard SQL, it retrieves the maximum non-NULL value.

MIN(field)

- Legacy SQL: MIN(field)

- Standard SQL: MIN(field)

- What it does: Returns the minimum value from the field column. In Standard SQL, it retrieves the minimum non-NULL value.

SUM(field)

- Legacy SQL: SUM(field)

- Standard SQL: SUM(field)

- What it does: Returns the sum of values from the field column (numerical data types only). In Standard SQL, it sums non-NULL values.

COUNT(field)

- Legacy SQL: COUNT(field)

- Standard SQL: COUNT(field)

- What it does: Returns the total number of values (NULL and non-NULL). In Standard SQL, it counts rows or expressions evaluating to non-NULL values.

EXACT_COUNT_DISTINCT(field)

- Legacy SQL: EXACT_COUNT_DISTINCT(field)

- Standard SQL: COUNT([DISTINCT] field)

- What it does: Returns the number of unique rows in the field column.

For a list of all aggregate functions, see the Legacy SQL and Standard SQL documentation.

Let’s look at the demo data to see how these functions work. We can calculate the average revenue for transactions, purchases for the highest and lowest amounts, total revenue, total transactions, and the number of unique transactions (to check if purchases were duplicated). To do this, we’ll write a query in which we specify the name of our Google BigQuery project, the dataset, and the table.

#legacy SQL

SELECT

AVG(revenue) as average_revenue,

MAX(revenue) as max_revenue,

MIN(revenue) as min_revenue,

SUM(revenue) as whole_revenue,

COUNT(transactionId) as transactions,

EXACT_COUNT_DISTINCT(transactionId) as unique_transactions

FROM

[owox-analytics:t_kravchenko.Demo_data]#standard SQL

SELECT

AVG(revenue) as average_revenue,

MAX(revenue) as max_revenue,

MIN(revenue) as min_revenue,

SUM(revenue) as whole_revenue,

COUNT(transactionId) as transactions,

COUNT(DISTINCT(transactionId)) as unique_transactions

FROM

`owox-analytics.t_kravchenko.Demo_data`As a result, we’ll get the following:

You can check the results of these calculations in the original table with demo data using standard Google Sheets functions (SUM, AVG, and others) or using pivot tables.

As you can see from the screenshot above, the number of transactions and unique transactions is different. This suggests there are two transactions in our table with the same transactionId:

If you’re interested in unique transactions, use a function that counts unique strings. Alternatively, you can group data using the GROUP BY function to get rid of duplicates before applying the aggregate function.

Date functions

These functions allow you to process dates: change their format, select the necessary field (day, month, or year), or shift the date by a certain interval.

They may be useful when:

- converting dates and times from different sources to a single format to set up advanced analytics

- creating automatically updated reports or trigger mailings (for example, when you need data for the last two hours, week, or month)

- creating cohort reports in which it’s necessary to obtain data for a period of days, weeks, or months

These are the most commonly used Bigquery date functions:

CURRENT_DATE()

- Legacy SQL: CURRENT_DATE()

- Standard SQL: CURRENT_DATE()

- Description: Returns the current date in the format

%YYYY-%MM-%DD

DATE(timestamp)

- Legacy SQL: DATE(timestamp)

- Standard SQL: DATE(timestamp)

- Description: Returns the date in the format

%Y-%m-%d. In Standard SQL, constructs a DATE value.

DATE_ADD()

- Legacy SQL: DATE_ADD()

- Standard SQL: DATE_ADD

- Description: Adds the specified interval to a TIMESTAMP data type (Legacy SQL) or DATE value (Standard SQL).

DATE_ADD(timestamp, -interval, interval_units)

- Legacy SQL: DATE_ADD(timestamp, -interval, interval_units)

- Standard SQL: DATE_SUB(timestamp, INTERVAL interval interval_units)

- Description: Returns the timestamp date, decreasing it by the specified interval.

DATEDIFF()

- Legacy SQL: DATEDIFF()

- Standard SQL: DATE_DIFF()

- Description: Returns the number of days between two TIMESTAMP values (Legacy SQL) or the number of intervals between two DATE values (Standard SQL).

DAY(timestamp)

- Legacy SQL: DAY(timestamp)

- Standard SQL: EXTRACT(DAY FROM timestamp)

- Description: Returns the day from the timestamp date. Values range from 1 to 31.

MONTH(timestamp)

- Legacy SQL: MONTH(timestamp)

- Standard SQL: EXTRACT(MONTH FROM timestamp)

- Description: Returns the month number from the timestamp date. Values range from 1 to 12.

YEAR(timestamp)

- Legacy SQL: YEAR(timestamp)

- Standard SQL: EXTRACT(YEAR FROM timestamp)

- Description: Returns the year from the timestamp date.

For a list of all Bigquery date functions, see the Legacy SQL and Standard SQL documentation.

Let’s take a look at our demo data to see how each of these functions works. For example, we’ll get the current date, turn the date from the original table into the format % YYYY -% MM-% DD, take it away, and add one day to it. Then, we’ll calculate the difference between the current date and the date from the source table and break the current date into separate year, month, and day fields. To do this, you can copy the sample queries below and replace the project name, dataset, and data table with your own.

#legacy SQL

SELECT

CURRENT_DATE() AS today,

DATE( date_UTC ) AS date_UTC_in_YYYYMMDD,

DATE_ADD( date_UTC,1, 'DAY') AS date_UTC_plus_one_day,

DATE_ADD( date_UTC,-1, 'DAY') AS date_UTC_minus_one_day,

DATEDIFF(CURRENT_DATE(), date_UTC ) AS difference_between_date,

DAY( CURRENT_DATE() ) AS the_day,

MONTH( CURRENT_DATE()) AS the_month,

YEAR( CURRENT_DATE()) AS the_year

FROM

[owox-analytics:t_kravchenko.Demo_data]#standard SQL

SELECT

today,

date_UTC_in_YYYYMMDD,

DATE_ADD( date_UTC_in_YYYYMMDD, INTERVAL 1 DAY) AS date_UTC_plus_one_day,

DATE_SUB( date_UTC_in_YYYYMMDD,INTERVAL 1 DAY) AS date_UTC_minus_one_day,

DATE_DIFF(today, date_UTC_in_YYYYMMDD, DAY) AS difference_between_date,

EXTRACT(DAY FROM today ) AS the_day,

EXTRACT(MONTH FROM today ) AS the_month,

EXTRACT(YEAR FROM today ) AS the_year

FROM (

SELECT

CURRENT_DATE() AS today,

DATE( date_UTC ) AS date_UTC_in_YYYYMMDD

FROM

`owox-analytics.t_kravchenko.Demo_data`)After running the query, you’ll receive this report:

See also Examples of reports that can be built using SQL queries on data in Google BigQuery and what unique metrics you can supplement Google Analytics data with OWOX BI.

String functions

Bigquery String functions allow you to generate a string, select and replace substrings, and calculate the length of a string and the index sequence of the substring in the original string. For example, with string functions, you can:

- filter a report with UTM tags that are passed to the page URL

- bring data into a single format if the source and campaign names are written in different registers

- replace incorrect data in a report (for example, if the campaign name is misprinted)

These are the most popular functions for working with strings:

CONCAT

- Legacy SQL: CONCAT('str1', 'str2') or 'str1' + 'str2'

- Standard SQL: CONCAT('str1', 'str2')

- Description: Concatenates 'str1' and 'str2' into one string. If any input argument is NULL, the function will return NULL.

CONTAINS

- Legacy SQL: expr CONTAINS 'str'

- Standard SQL: REGEXP_CONTAINS(value, regexp)

- Description: Returns true if the expression "expr" contains the specified string (Legacy SQL) or partially matches the regular expression (Standard SQL). Returns an error for invalid regex.

LENGTH

- Legacy SQL: LENGTH('str')

- Standard SQL: CHAR_LENGTH('str') or CHARACTER_LENGTH('str')

- Description: Returns the length of the string 'str' (number of characters).

SUBSTR

- Legacy SQL: SUBSTR('str', index [, max_len])

- Standard SQL: SUBSTR(value, position [, length])

- Description: Returns a substring of length max_len starting with an index character from string 'str'.

LOWER

- Legacy SQL: LOWER('str')

- Standard SQL: LOWER('str')

- Description: Converts all characters in the string 'str' to lowercase.

UPPER

- Legacy SQL: UPPER('str')

- Standard SQL: UPPER('str')

- Description: Converts all characters in the string 'str' to uppercase.

INSTR

- Legacy SQL: INSTR('str1', 'str2')

- Standard SQL: STRPOS('str1', 'str2')

- Description: Returns the index of the first occurrence of string 'str2' in 'str1'; otherwise, returns 0.

REPLACE

- Legacy SQL: REPLACE('str1', 'str2', 'str3')

- Standard SQL: REPLACE('str1', 'str2', 'str3')

- Description: Substitutes all occurrences of 'str2' within 'str1' with 'str3'.

You can learn more about all Bigquery string functions in the Legacy SQL and Standard SQL documentation.

Let’s look at the demo data to see how to use the described functions. Suppose we have three separate columns that contain day, month, and year values:

Working with a date in this format isn’t very convenient, so we can combine the values into one column. To do this, use the SQL queries below and remember to substitute the name of your project, dataset, and table in Google BigQuery.

#legacy SQL

SELECT

CONCAT(the_day,'-',the_month,'-',the_year) AS mix_string1

FROM (

SELECT

31 AS the_day,

12 AS the_month,

2018 AS the_year

FROM

[owox-analytics:t_kravchenko.Demo_data])

GROUP BY

mix_string1#standard SQL

SELECT

CONCAT(the_day,'-',the_month,'-',the_year) AS mix_string1

FROM (

SELECT

31 AS the_day,

12 AS the_month,

2018 AS the_year

FROM

owox-analytics.t_kravchenko.Demo_data)

GROUP BY

mix_string1After running the query, we receive the date in one column:

Often, when you download a page on a website, the URL records the values of the variables the user has chosen. This can be a payment or delivery method, transaction number, index of the physical store in which the buyer wants to pick up the item, etc. Using a SQL query, you can select these parameters from the page address. Consider two examples of how and why you might do this.

Example 1. Suppose we want to know the number of purchases in which users pick up goods from physical stores. To do this, we need to calculate the number of transactions sent from pages in the URL that contain a substring shop_id (an index for a physical store). We can do this with the following queries:

#legacy SQL

SELECT

COUNT(transactionId) AS transactions,

check

FROM (

SELECT

transactionId,

page CONTAINS 'shop_id' AS check

FROM

[owox-analytics:t_kravchenko.Demo_data])

GROUP BY

check#standard SQL

SELECT

COUNT(transactionId) AS transactions,

check1,

check2

FROM (

SELECT

transactionId,

REGEXP_CONTAINS( page, 'shop_id') AS check1,

page LIKE '%shop_id%' AS check2

FROM

`owox-analytics.t_kravchenko.Demo_data`)

GROUP BY

check1,

check2From the resulting table, we see that 5502 transactions (check = true) were sent from pages containing shop_id:

Example 2. You’ve assigned a delivery_id to each delivery method, and you specify the value of this parameter in the page URL. To find out which delivery method the user has chosen, you need to select the delivery_id in a separate column.

We can use the following queries for this:

#legacy SQL

SELECT

page_lower_case,

page_length,

index_of_delivery_id,

selected_delivery_id,

REPLACE(selected_delivery_id, 'selected_delivery_id=', '') as delivery_id

FROM (

SELECT

page_lower_case,

page_length,

index_of_delivery_id,

SUBSTR(page_lower_case, index_of_delivery_id) AS selected_delivery_id

FROM (

SELECT

page_lower_case,

LENGTH(page_lower_case) AS page_length,

INSTR(page_lower_case, 'selected_delivery_id') AS index_of_delivery_id

FROM (

SELECT

LOWER( page) AS page_lower_case,

UPPER( page) AS page_upper_case

FROM

[owox-analytics:t_kravchenko.Demo_data])))

ORDER BY

page_lower_case ASC#standard SQL

SELECT

page_lower_case,

page_length,

index_of_delivery_id,

selected_delivery_id,

REPLACE(selected_delivery_id, 'selected_delivery_id=', '') AS delivery_id

FROM (

SELECT

page_lower_case,

page_length,

index_of_delivery_id,

SUBSTR(page_lower_case, index_of_delivery_id) AS selected_delivery_id

FROM (

SELECT

page_lower_case,

CHAR_LENGTH(page_lower_case) AS page_length,

STRPOS(page_lower_case, 'selected_delivery_id') AS index_of_delivery_id

FROM (

SELECT

LOWER( page) AS page_lower_case,

UPPER( page) AS page_upper_case

FROM

`owox-analytics.t_kravchenko.Demo_data`)))

ORDER BY

page_lower_case ASCAs a result, we get a table like this in Google BigQuery:

Window functions

These functions are similar to the aggregate functions we discussed above. The main difference is that Bigquery window functions don’t carry out calculations on the entire set of data selected using the query but only on part of that data — a subset or window.

Using window functions, you can aggregate data in a group section without using the JOIN function to combine multiple queries. For example, you can calculate the average revenue per ad campaign or the number of transactions per device. By adding another field to the report, you can easily find out, for example, the share of revenue from an ad campaign on Black Friday or the share of transactions made from a mobile application.

Together with each function in the query, you must spell out the OVER expression that defines the window boundaries. OVER contains three components that you can work with:

- PARTITION BY — Defines the characteristic by which you divide the original data into subsets, such as clientId or DayTime

- ORDER BY — Defines the order of rows in a subset, such as hour DESC

- WINDOW FRAME — Allows you to process rows within a subset of a specific feature (for example, only the five rows before the current row)

In this table, we’ve collected the most frequently used window functions:

AVG, COUNT, MAX, MIN, SUM

- Legacy SQL:

- AVG(field)

- COUNT(field)

- COUNT(DISTINCT field)

- MAX()

- MIN()

- SUM()

- Standard SQL:

- AVG([DISTINCT] field)

- COUNT(field)

- COUNT([DISTINCT] field)

- MAX(field)

- MIN(field)

- SUM(field)

- Description: Returns the average, count, maximum, minimum, or total value from the field column within the subset. DISTINCT is used to calculate only unique (non-repeating) values.

DENSE_RANK

- Legacy SQL: DENSE_RANK()

- Standard SQL: DENSE_RANK()

- Description: Returns the row number within a subset.

FIRST_VALUE

- Legacy SQL: FIRST_VALUE(field)

- Standard SQL: FIRST_VALUE(field[{RESPECT | IGNORE} NULLS])

- Description: Returns the first value from the field column within a subset. RESPECT or IGNORE NULLS specifies whether to include or ignore NULL strings.

LAST_VALUE

- Legacy SQL: LAST_VALUE(field)

- Standard SQL: LAST_VALUE(field[{RESPECT | IGNORE} NULLS])

- Description: Returns the last value from the field column within a subset. RESPECT or IGNORE NULLS specifies whether to include or ignore NULL strings.

LAG

- Legacy SQL: LAG(field)

- Standard SQL: LAG(field[, offset [, default_expression]])

- Description: Returns the value of the previous row relative to the current row in the subset. Offset specifies the number of rows to move down. Default_expression is returned if no required value exists in the subset.

LEAD

- Legacy SQL: LEAD(field)

- Standard SQL: LEAD(field[, offset [, default_expression]])

- Description: Returns the value of the next row relative to the current row in the subset. Offset specifies the number of rows to move up. Default_expression is returned if no required value exists in the subset.

You can see a list of all aggregate analytic functions and navigation functions in the documentation for Legacy SQL and Standard SQL.

Example 1. Let’s say we want to analyze the activity of customers during working and non-working hours. To do this, we need to divide transactions into two groups and calculate the metrics of interest:

- Group 1 — Purchases during working hours from 9:00 to 18:00

- Group 2 — Purchases after hours from 00:00 to 9:00 and from 18:00 to 23:59

In addition to working and non-working hours, another variable for forming a window is clientId. That is, for each user, we’ll have two windows:

Window 1

- Client ID: clientId 1

- DayTime: working hours

Window 2

- Client ID: clientId 2

- DayTime: non-working hours

Window 3

- Client ID: clientId 3

- DayTime: working hours

Window 4

- Client ID: clientId 4

- DayTime: non-working hours

Window N

- Client ID: clientId N

- DayTime: working hours

Window N+1

- Client ID: clientId N+1

- DayTime: non-working hours

Let’s use demo data to calculate the average, maximum, minimum, and total revenue, total number of transactions, and number of unique transactions per user during working and non-working hours. The requests below will help us do this.

#legacy SQL

SELECT

date,

clientId,

DayTime,

avg_revenue,

max_revenue,

min_revenue,

sum_revenue,

transactions,

unique_transactions

FROM (

SELECT

date,

clientId,

DayTime,

AVG(revenue) OVER (PARTITION BY date, clientId, DayTime) AS avg_revenue,

MAX(revenue) OVER (PARTITION BY date, clientId, DayTime) AS max_revenue,

MIN(revenue) OVER (PARTITION BY date, clientId, DayTime) AS min_revenue,

SUM(revenue) OVER (PARTITION BY date, clientId, DayTime) AS sum_revenue,

COUNT(transactionId) OVER (PARTITION BY date, clientId, DayTime) AS transactions,

COUNT(DISTINCT(transactionId)) OVER (PARTITION BY date, clientId, DayTime) AS unique_transactions

FROM (

SELECT

date,

date_UTC,

clientId,

transactionId,

revenue,

page,

hour,

CASE

WHEN hour>=9 AND hour<=18 THEN 'working hours'

ELSE 'non-working hours'

END AS DayTime

FROM

[owox-analytics:t_kravchenko.Demo_data]))

GROUP BY

date,

clientId,

DayTime,

avg_revenue,

max_revenue,

min_revenue,

sum_revenue,

transactions,

unique_transactions

ORDER BY

transactions DESC#standard SQL

SELECT

date,

clientId,

DayTime,

avg_revenue,

max_revenue,

min_revenue,

sum_revenue,

transactions,

unique_transactions

FROM (

SELECT

date,

clientId,

DayTime,

AVG(revenue) OVER (PARTITION BY date, clientId, DayTime) AS avg_revenue,

MAX(revenue) OVER (PARTITION BY date, clientId, DayTime) AS max_revenue,

MIN(revenue) OVER (PARTITION BY date, clientId, DayTime) AS min_revenue,

SUM(revenue) OVER (PARTITION BY date, clientId, DayTime) AS sum_revenue,

COUNT(transactionId) OVER (PARTITION BY date, clientId, DayTime) AS transactions,

COUNT(DISTINCT(transactionId)) OVER (PARTITION BY date, clientId, DayTime) AS unique_transactions

FROM (

SELECT

date,

date_UTC,

clientId,

transactionId,

revenue,

page,

hour,

CASE

WHEN hour>=9 AND hour<=18 THEN 'working hours'

ELSE 'non-working hours'

END AS DayTime

FROM

`owox-analytics.t_kravchenko.Demo_data`))

GROUP BY

date,

clientId,

DayTime,

avg_revenue,

max_revenue,

min_revenue,

sum_revenue,

transactions,

unique_transactions

ORDER BY

transactions DESCLet’s see what happens as a result using the example of the user with clientId 102041117.1428132012.

In the original table for this user, we have the following data:

By running the query, we receive a report that contains the average, minimum, maximum, and total revenue from this user, as well as the user’s total number of transactions. As you can see in the screenshot below, both transactions were made by the user during working hours:

Example 2. Now for a more complicated task:

- Put sequence numbers for all transactions in the window depending on the time of their execution. Recall that we define the window by user and working/non-working time slots.

- Report the revenue of the next/previous transaction (relative to the current) within the window.

- Display the revenue of the first and last transactions in the window.

To do this, we’ll use the following queries:

#legacy SQL

SELECT

date,

clientId,

DayTime,

hour,

rank,

revenue,

lead_revenue,

lag_revenue,

first_revenue_by_hour,

last_revenue_by_hour

FROM (

SELECT

date,

clientId,

DayTime,

hour,

DENSE_RANK() OVER (PARTITION BY date, clientId, DayTime ORDER BY hour) AS rank,

revenue,

LEAD( revenue, 1) OVER (PARTITION BY date, clientId, DayTime ORDER BY hour) AS lead_revenue,

LAG( revenue, 1) OVER (PARTITION BY date, clientId, DayTime ORDER BY hour) AS lag_revenue,

FIRST_VALUE(revenue) OVER (PARTITION BY date, clientId, DayTime ORDER BY hour) AS first_revenue_by_hour,

LAST_VALUE(revenue) OVER (PARTITION BY date, clientId, DayTime ORDER BY hour) AS last_revenue_by_hour

FROM (

SELECT

date,

date_UTC,

clientId,

transactionId,

revenue,

page,

hour,

CASE

WHEN hour>=9 AND hour<=18 THEN 'working hours'

ELSE 'non-working hours'

END AS DayTime

FROM

[owox-analytics:t_kravchenko.Demo_data]))

GROUP BY

date,

clientId,

DayTime,

hour,

rank,

revenue,

lead_revenue,

lag_revenue,

first_revenue_by_hour,

last_revenue_by_hour

ORDER BY

date,

clientId,

DayTime,

hour,

rank,

revenue,

lead_revenue,

lag_revenue,

first_revenue_by_hour,

last_revenue_by_hour#standard SQL

SELECT

date,

clientId,

DayTime,

hour,

rank,

revenue,

lead_revenue,

lag_revenue,

first_revenue_by_hour,

last_revenue_by_hour

FROM (

SELECT

date,

clientId,

DayTime,

hour,

DENSE_RANK() OVER (PARTITION BY date, clientId, DayTime ORDER BY hour) AS rank,

revenue,

LEAD( revenue, 1) OVER (PARTITION BY date, clientId, DayTime ORDER BY hour) AS lead_revenue,

LAG( revenue, 1) OVER (PARTITION BY date, clientId, DayTime ORDER BY hour) AS lag_revenue,

FIRST_VALUE(revenue) OVER (PARTITION BY date, clientId, DayTime ORDER BY hour) AS first_revenue_by_hour,

LAST_VALUE(revenue) OVER (PARTITION BY date, clientId, DayTime ORDER BY hour) AS last_revenue_by_hour

FROM (

SELECT

date,

date_UTC,

clientId,

transactionId,

revenue,

page,

hour,

CASE

WHEN hour>=9 AND hour<=18 THEN 'working hours'

ELSE 'non-working hours'

END AS DayTime

FROM

`owox-analytics.t_kravchenko.Demo_data`))

GROUP BY

date,

clientId,

DayTime,

hour,

rank,

revenue,

lead_revenue,

lag_revenue,

first_revenue_by_hour,

last_revenue_by_hour

ORDER BY

date,

clientId,

DayTime,

hour,

rank,

revenue,

lead_revenue,

lag_revenue,

first_revenue_by_hour,

last_revenue_by_hourWe can check the results of the calculations using the example of a user we already know: clientId 102041117.1428132012:

From the screenshot above, we can see that:

- the first transaction was at 15:00, and the second transaction was at 16:00

- after the transaction at 15:00, there was a transaction at 16:00 with revenue of 25066 (column lead_revenue)

- before the transaction at 16:00, there was a transaction at 15:00 with revenue of 3699 (column lag_revenue)

- the first transaction within the window was at 15:00, and the revenue for this transaction was 3699 (column first_revenue_by_hour)

- the query processes the data line by line, so for the transaction in question, the last transaction in the window will be itself, and the values in the columns last_revenue_by_hour and revenue will be the same

Helpful articles about Google BigQuery:

- Top 6 BigQuery Visualization Tools

- How to Upload Data to Google BigQuery

- How to Upload Raw Data from Google Ads to Google BigQuery

- Google BigQuery ↔ Google Sheets Connector

- Automate Reports in Google Sheets Using Data from Google BigQuery

- Automate reports in Looker Studio Based on Data from Google BigQuery

If you want to collect unsampled data from your website in Google BigQuery but don’t know where to start, book a demo. We’ll tell you about all the possibilities you get with BigQuery and OWOX.

Key Takeaways

In this article, we’ve looked at the most popular groups of functions: aggregate, date, string, and window. However, Google BigQuery has many more useful functions, including:

- casting functions that allow you to convert data to a specific format

- table wildcard functions that allow you to access multiple tables in a dataset

- regular expression functions that allow you to describe the model of a search query and not its exact value

We’ll definitely write about these functions on our blog. In the meantime, you can try out all the functions described in this article using our demo data.

Frequently asked questions

Google BigQuery is a powerful cloud-based data warehouse that enables fast, SQL-like queries against large datasets. Marketers use it to analyze customer behavior, campaign performance, and sales data in real-time, helping to make informed decisions, optimize marketing strategies, and improve ROI through data-driven insights.

Google BigQuery offers scalability, speed, and cost-effectiveness for marketing analysis. Its ability to handle massive datasets with ease allows marketers to perform complex analyses and generate insights quickly. Additionally, its integration with various data sources and tools streamlines data analysis workflows, enhancing the efficiency of marketing strategies.

Legacy SQL refers to Google BigQuery's older SQL dialect, which has some Google-specific syntax and functions. Standard SQL, on the other hand, adheres more closely to the ANSI SQL standard, offering broader compatibility with traditional SQL queries. Standard SQL is recommended for new projects due to its predictability and consistency.

Legacy table SQL in the context of Google BigQuery refers to the SQL syntax used to query tables created or managed under the legacy SQL model. This syntax differs slightly from standard SQL, particularly in how it handles table partitioning, table decorators, and specific functions. Legacy tables and their SQL syntax are primarily maintained for backward compatibility.

Structured Query Language (SQL) is a language for working with databases. It allows you to retrieve data from, add data to, and modify data in large data arrays. Google BigQuery supports two SQL dialects: Standard SQL and the outdated Legacy SQL.

When building SQL queries, aggregate, date, string, and window functions are most often used. In this article, we review each of these groups of functions in detail.

- Aggregate data using aggregate functions

- Process dates using date functions

- Use string functions to work with rows

- Perform calculations on subsets of data using window functions

.png)

.png)